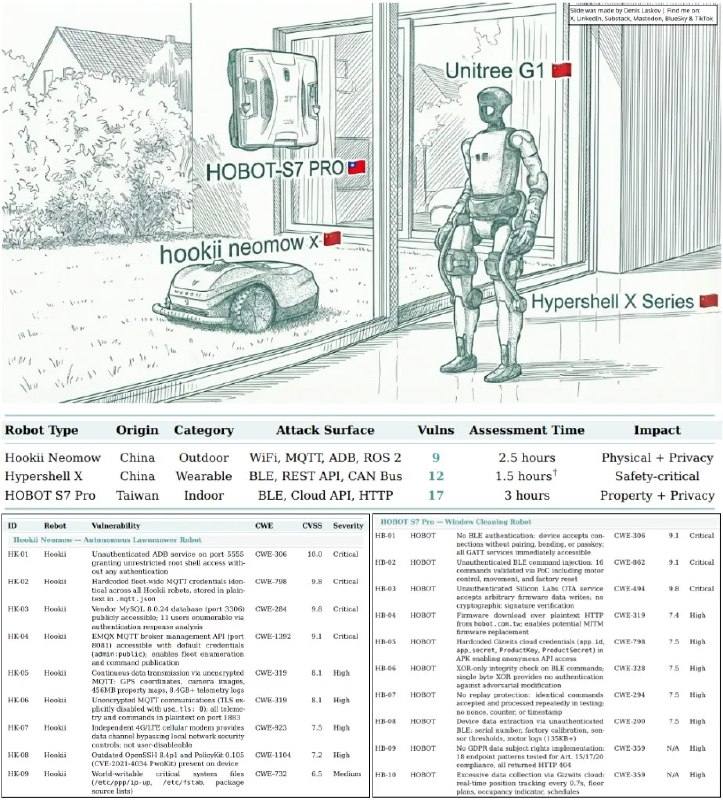

A research team from Alias Robotics, which specializes in robotics cybersecurity, decided to test how well modern large language models (LLMs) can hack into “smart gadgets.” For the experiment, they took their AI agent CAI (Cybersecurity AI), picked three different household robots, and tasked the agent with finding vulnerabilities in them.

Previously, hacking such devices was a job for bearded hackers in old sweaters who would spend weeks tinkering with firmware, reverse-engineering protocols, and poking at hardware. This time, the AI agent needed only about seven hours to completely break the security of all three gadgets, uncovering 38 vulnerabilities, 16 of them critical.

What was the outcome?

The first device was the Hookii Neomow autonomous lawn mower. The agent immediately detected an open debug port (ADB, Android Debug Bridge), connected to it without any trouble, and gained root access. It then pulled the stored cloud account credentials from the device's memory and found that every mower used the same password. As a result, the AI could control a fleet of 267 machines worldwide. It also turned out that these mowers constantly and openly upload camera photos, GPS coordinates, and detailed 3D maps of their owners' properties.

The next target was the Hypershell X exoskeleton, a wearable device with built-in motors. The AI quickly noticed that its Bluetooth interface had no authentication at all: anyone with a smartphone could connect and send commands, changing the speed or shutting off the motors (hello, broken legs). On top of that, the agent obtained support keys and gained access to more than 3,300 of the company's internal emails.

The third device was the HOBOT S7 Pro window-cleaning robot. Once again, the weak points were leaky Bluetooth and a poorly secured HTTP-based process for downloading firmware. The agent easily took over control and sent commands straight to the motors: for example, it could remotely switch off the vacuum suction that holds the robot to the glass while it hangs on a 20th-floor window, or even drop it onto someone's head.

The most amusing part of the story is that when the researchers tried to report their findings to the manufacturers, they were mostly ignored. No official responses followed; the suspicion is that the developers already knew about the sloppy code and hardcoded passwords in their systems. The exoskeleton manufacturer did reply, but only to say that it is not planning to review vulnerability reports at this time, and simply brushed the researchers off.

The study's authors draw an important conclusion: traditional approaches to security are outdated. AI agents now find vulnerabilities faster than humans can detect or fix them. Work that used to take security teams weeks, an AI agent can now finish in a couple of hours, practically over lunch.

This is what the full report with all the details of this research looks like.

Created with n8n:

https://cutt.ly/n8n

Created with syllaby:

https://cutt.ly/syllaby