🌟 MiniMax-M1: a revolutionary reasoning LLM with a context window of up to 1 million tokens

MiniMax-M1 is the world’s first open-weight, large-scale hybrid-attention reasoning model. It combines a Mixture-of-Experts (MoE) architecture with Lightning Attention and natively supports a context of up to 1 million tokens, 8× the context length of DeepSeek R1. This design makes it markedly more compute-efficient than comparable reasoning models, especially at long generation lengths.
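Lightning Attention is an optimized implementation of linear attention. The sketch below is not MiniMax's kernel: it omits the feature maps, normalization, any decay terms, and the block-wise tiling a production kernel would use. It only illustrates the identity that lets linear attention scale linearly with sequence length: once the softmax is removed, each causal output can be read from a running d_k × d_v state instead of comparing the query with every previous key. All function names are hypothetical.

```python
# Illustrative sketch of causal linear attention (the family Lightning Attention
# belongs to). NOT MiniMax's implementation: no feature map, normalization,
# decay, or tiling; just the core recurrence.

def add_outer_product(state, k, v):
    """In place: state += outer(k, v), where state is a d_k x d_v list of lists."""
    for i, ki in enumerate(k):
        for j, vj in enumerate(v):
            state[i][j] += ki * vj


def linear_attention_recurrent(qs, ks, vs):
    """O(n) in sequence length: keep S_t = sum_{s<=t} k_s v_s^T and read it with q_t."""
    d_k, d_v = len(ks[0]), len(vs[0])
    state = [[0.0] * d_v for _ in range(d_k)]
    outputs = []
    for q, k, v in zip(qs, ks, vs):
        add_outer_product(state, k, v)
        outputs.append([sum(q[i] * state[i][j] for i in range(d_k)) for j in range(d_v)])
    return outputs


def linear_attention_quadratic(qs, ks, vs):
    """O(n^2) reference: o_t = sum_{s<=t} (q_t . k_s) v_s, i.e. causal attention without softmax."""
    d_v = len(vs[0])
    outputs = []
    for t, q in enumerate(qs):
        out = [0.0] * d_v
        for s in range(t + 1):
            score = sum(a * b for a, b in zip(q, ks[s]))
            for j in range(d_v):
                out[j] += score * vs[s][j]
        outputs.append(out)
    return outputs


# Toy sequence of 3 tokens with d_k = d_v = 2; both forms give the same outputs.
qs = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
ks = [[1.0, 2.0], [0.0, 1.0], [1.0, 1.0]]
vs = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
print(linear_attention_recurrent(qs, ks, vs))
print(linear_attention_quadratic(qs, ks, vs))
```

Because the state has a fixed size, the per-token cost of the recurrent form does not grow with context length. The hybrid design keeps some standard softmax attention layers alongside such linear layers, trading a little of that saving for the precise token-to-token lookups softmax attention provides.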

The model has 456 billion parameters in total, of which about 45.9 billion (roughly 10%) are activated for each token. This keeps generation highly resource-efficient: at a generation length of 100,000 tokens, MiniMax-M1 consumes only about 25% of the FLOPs of DeepSeek R1.
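Only a fraction of the parameters do work for any given token because, in a Mixture-of-Experts layer, a router picks a few experts per token and the rest are skipped. Below is a minimal sketch of generic top-k routing, assuming scalar inputs and toy lambda "experts" for readability. MiniMax-M1's actual expert count, value of k, and gating details are not covered here; every name in the code is hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Pick the k experts with the highest router logits and renormalize
    their gate weights with a softmax over the selected experts only."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    gates = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, gates))


def moe_forward(x, experts, router_logits, k):
    """Gate-weighted sum of the chosen experts' outputs. Unchosen experts are
    never called, so their parameters cost nothing for this token."""
    return sum(gate * experts[i](x) for i, gate in top_k_route(router_logits, k))


# Toy layer: 4 hypothetical experts, 2 active per token.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 10 * x, lambda x: -x]
router_logits = [0.0, 0.0, -5.0, -5.0]  # a real model computes these with a learned router
print(top_k_route(router_logits, k=2))
print(moe_forward(2.0, experts, router_logits, k=2))
```

Here half of the experts run for the token; in MiniMax-M1 the ratio of active to total parameters is about 45.9 / 456 ≈ 0.10.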

The model was trained with large-scale reinforcement learning using CISPO, a new RL algorithm that clips importance-sampling weights rather than token updates, on tasks ranging from mathematical reasoning to real-world software engineering. The full RL training run cost around $534,000. Two versions are available, with “thinking budgets” of 40K and 80K tokens, to suit different scenarios.
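The difference between CISPO and PPO/GRPO-style clipping lies in what gets clipped. PPO drops the gradient of a token whose probability ratio has left the trust region; CISPO, as the MiniMax team describes it, clips the importance-sampling weight itself and treats it as a constant, so every token keeps a gradient. The sketch below contrasts the per-token gradient coefficients (the factor multiplying ∇ log π_θ of a token) under both schemes. It is an illustration under assumptions, not the reference implementation: the bounds `eps_low`/`eps_high` are hypothetical values, the functions work on plain lists instead of autograd tensors, and loss normalization is left out.

```python
import math


def cispo_coefficients(logp_new, logp_old, advantages, eps_low, eps_high):
    """Per-token gradient coefficient under a CISPO-style objective (sketch).

    The ratio r_t = pi_new(o_t) / pi_old(o_t) is clipped to
    [1 - eps_low, 1 + eps_high] and treated as a constant (stop-gradient),
    so token t contributes clip(r_t) * A_t * grad log pi_new(o_t).
    """
    coeffs = []
    for new, old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(new - old)
        clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
        coeffs.append(clipped * adv)
    return coeffs


def ppo_coefficients(logp_new, logp_old, advantages, eps):
    """For contrast: gradient coefficient of PPO's min(r*A, clip(r)*A) objective.

    When the clipped branch is selected the term is constant in the policy
    parameters, so the token contributes no gradient at all.
    """
    coeffs = []
    for new, old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(new - old)
        if (adv > 0 and ratio > 1.0 + eps) or (adv < 0 and ratio < 1.0 - eps):
            coeffs.append(0.0)  # clipped branch: this token's update is dropped
        else:
            coeffs.append(ratio * adv)  # d(r*A) = r * A * d(log pi)
    return coeffs


# Toy batch of three tokens (hypothetical probabilities and advantages).
logp_old = [math.log(0.4), math.log(0.5), math.log(0.5)]
logp_new = [math.log(0.6), math.log(0.5), math.log(0.45)]  # ratios 1.5, 1.0, 0.9
advantages = [1.0, -1.0, 0.5]

# Token 0 drifted past 1 + eps: CISPO keeps a clipped coefficient, PPO zeroes it.
print(cispo_coefficients(logp_new, logp_old, advantages, eps_low=0.2, eps_high=0.2))
print(ppo_coefficients(logp_new, logp_old, advantages, eps=0.2))
```

For tokens inside the trust region the two coefficients coincide; they differ only for tokens PPO would silence. This matches the MiniMax team's stated motivation for CISPO: rare tokens that shift sharply in probability can still carry learning signal.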

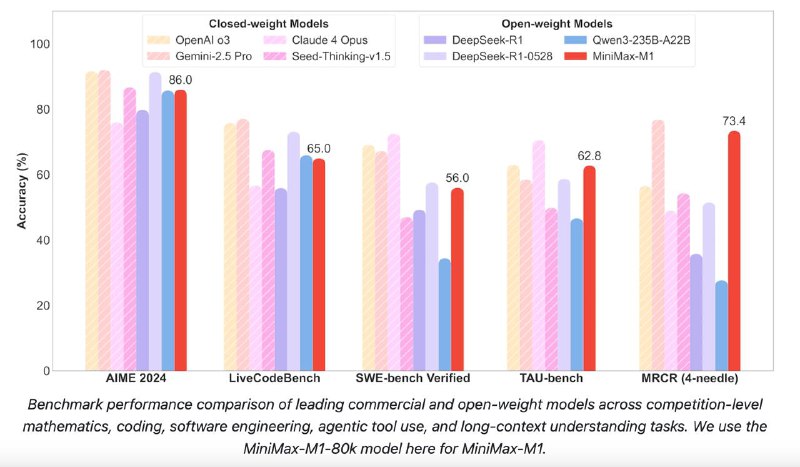

Benchmark results show that MiniMax-M1 outperforms models such as the original DeepSeek R1 and Qwen3-235B on mathematics, programming, and long-context tasks, with its clearest leads in software engineering and long-context reasoning.

Below are comparative results on key tests:

– AIME 2024: 86.0 (for the 80K version) versus 85.7 for Qwen3 and 79.8 for DeepSeek R1.

– SWE-bench Verified: 56.0, while Qwen3 scores only 34.4.

– OpenAI-MRCR (128k): 73.4 versus 27.7 for Qwen3.

– TAU-bench (airline): 62.0 versus 34.7 for Qwen3.

– LongBench-v2: 61.5 compared to 50.1 for Qwen3.

If you wish to try the model yourself, follow the links below:

▪ On Hugging Face: https://huggingface.co/collections/MiniMaxAI/minimax-m1-68502ad9634ec0eeac8cf094

▪ On GitHub: https://github.com/MiniMax-AI/MiniMax-M1

▪ Technical report available here: https://github.com/MiniMax-AI/MiniMax-M1/blob/main/MiniMax_M1_tech_report.pdf

This marks an impressive step forward in reasoning-oriented language models, combining scale, efficiency, and practical applicability.