OpenAI has released a new version of GPT, called GPT-5.4, with significantly improved computer-use capabilities and fewer hallucinations.

The company is rolling out GPT-5.4 across all its services: ChatGPT, Codex, and the API. The model is available in Thinking and Pro variants, but there is no fast (Instant) version yet; GPT-5.3 Instant, designed for simple tasks and chat, was released just two days earlier. Let's focus on the more substantial updates, though.

The most important change is a major upgrade to a ChatGPT capability that previously received little attention: computer use. The model can now see the desktop, click the mouse, fill out forms, and carry out tasks that were previously out of reach for chatbots. An earlier experiment with a similar feature in Operator produced mediocre results; this time, OpenAI promises better performance. We'll see whether the model is truly reliable enough for everyday use.

Another interesting addition is the preamble. When the model spends a long time working through a complex task, it posts the main steps of its reasoning in the chat as it goes. If you notice something going wrong, you can immediately add a prompt or correction while the task is still running. This is especially useful if you forgot important context or phrased the prompt poorly: instead of waiting for the final answer, you can intervene mid-process and steer the model in the right direction.

OpenAI continues to work on reducing hallucinations. GPT-5.2 Thinking already performed well in this area, and GPT-5.4 goes further. The evaluation uses two metrics. The first, Individual claims, extracts the separate statements from each response and checks how many are false; by this measure, GPT-5.4 makes 33% fewer errors than GPT-5.2. The second, Full responses, measures the share of answers containing at least one error; that share decreased by 18%.
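To make the difference between the two metrics concrete, here is a minimal sketch, assuming each response has already been split into claims and each claim labeled true or false. The `Response` class, the helper functions, and the toy data are all hypothetical illustrations, not OpenAI's evaluation code. The sketch shows why the claim-level figure can fall faster than the response-level one: an answer still counts as wrong as long as any single claim in it is false.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One model answer split into claims; each flag is True if that claim was judged false."""
    claim_is_false: list[bool]


def claim_error_rate(responses: list[Response]) -> float:
    """'Individual claims' style metric: share of all extracted claims that are false."""
    flags = [flag for r in responses for flag in r.claim_is_false]
    return sum(flags) / len(flags)


def response_error_rate(responses: list[Response]) -> float:
    """'Full responses' style metric: share of answers with at least one false claim."""
    return sum(any(r.claim_is_false) for r in responses) / len(responses)


def relative_reduction(old: float, new: float) -> float:
    """Relative drop from old to new; 0.33 would mean 33% fewer errors."""
    return (old - new) / old


# Hypothetical toy data (not real evaluation results): 4 answers, 9 claims each time.
old_model = [Response([True, True, False]), Response([True, False]),
             Response([False, True]), Response([False, False])]
new_model = [Response([True, False, False]), Response([True, False]),
             Response([False, False]), Response([False, False])]

claims_drop = relative_reduction(claim_error_rate(old_model), claim_error_rate(new_model))
answers_drop = relative_reduction(response_error_rate(old_model), response_error_rate(new_model))
print(round(claims_drop, 2), round(answers_drop, 2))  # prints 0.5 0.33
```

With this toy data, the claim-level error rate halves while the share of flawed answers drops by only a third: two answers still contain one false claim each, so they keep counting as wrong. That is the same pattern as the 33% versus 18% figures reported for GPT-5.4.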

The context window has grown to one million tokens, far more than GPT-5.2 offered: 400,000 tokens in the API and at most 272,000 in ChatGPT. According to some sources, however, the ChatGPT limit remains at 272K with GPT-5.4, which is somewhat disappointing.

OpenAI is also optimizing how context is used: the new model consumes fewer tokens for the same tasks, and tool definitions are loaded only when needed rather than all at once. When the context limit is reached, a mechanism called compaction kicks in, removing unnecessary information from the context and keeping only what is essential. The method is not flawless, though: competing models such as Claude sometimes forget important details after this kind of cleanup.
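The compaction idea can be illustrated with a minimal sketch. OpenAI has not published how its compaction works, so everything here is an assumption: the `Message` type, the word-count stand-in for a tokenizer, the `budget` and `keep_recent` parameters, and the rule of keeping system messages plus the most recent turns while collapsing older ones into a single note are all hypothetical. The sketch also shows where the weakness mentioned above comes from: whatever gets folded into the summary is no longer available verbatim.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str  # "system", "user", "assistant" or "tool"
    text: str


def count_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def compact(history: list[Message], budget: int, keep_recent: int = 4) -> list[Message]:
    """Hypothetical single-pass compaction.

    If the history exceeds `budget` tokens, keep all system messages and the last
    `keep_recent` non-system messages, and replace everything in between with one
    short note. The result is not guaranteed to fit the budget; this is only a sketch.
    """
    total = sum(count_tokens(m.text) for m in history)
    if total <= budget:
        return history  # under the limit: leave the context untouched

    system = [m for m in history if m.role == "system"]
    rest = [m for m in history if m.role != "system"]
    split = max(len(rest) - keep_recent, 0)
    older, recent = rest[:split], rest[split:]
    if not older:
        return history  # nothing old enough to fold away

    # A real system would ask the model to summarize `older`; here we only note what was dropped.
    summary = Message("system", f"[compacted {len(older)} earlier messages]")
    return system + [summary] + recent


# Usage: one system prompt plus 10 long turns (52 "tokens" each) against a 300-token budget.
history = [Message("system", "You are a helpful assistant.")] + [
    Message("user" if i % 2 == 0 else "assistant", f"turn {i} " + "word " * 50)
    for i in range(10)
]
compacted = compact(history, budget=300)
print(len(compacted), compacted[1].text)  # prints 6 [compacted 6 earlier messages]
```

Keeping the most recent turns intact is a common design choice because they usually matter most for the next step; the trade-off is that details buried in older turns survive only if the summary happens to capture them.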

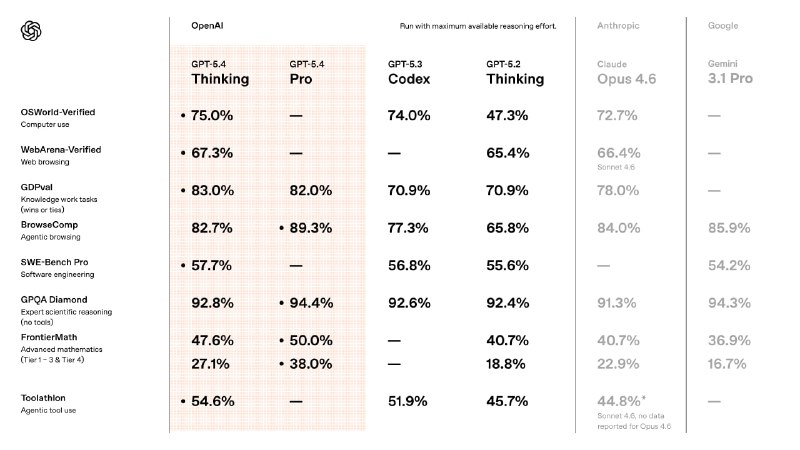

The benchmarks come with some caveats. GPT-5.4 leads in nearly all tests in both its Thinking and Pro versions, but the significant gains are mainly in computer interaction, and it is this improvement that makes the new capabilities in ChatGPT possible.

Other metrics show more modest growth. Compared with GPT-5.2 Thinking, the progress is mainly in computer use and in automating programming tasks and terminal commands. Against competitors such as Opus 4.6 and Gemini 3.1 Pro, GPT-5.4's advantage is only a few percent.

Online reaction to the new model has been generally positive, with GPT-5.4 Thinking singled out in particular: many users note that it nearly matches the more expensive Pro variant on most tasks thanks to its efficiency and the quality of its results. Opinions on the interface are split: some find it better than Opus or Gemini, while others prefer a different visual style. Perception of UI is highly subjective.

Finally, according to The Information, OpenAI is moving to a monthly release schedule for its models: exactly one month passed between the releases of GPT-5.3-Codex and GPT-5.4. Anthropic is expected to take a similar approach, and rumors suggest that new versions of models such as Sonnet and Opus are already in testing and will soon be available to the public.

Created with n8n:

https://cutt.ly/n8n

Created with syllaby:

https://cutt.ly/syllaby