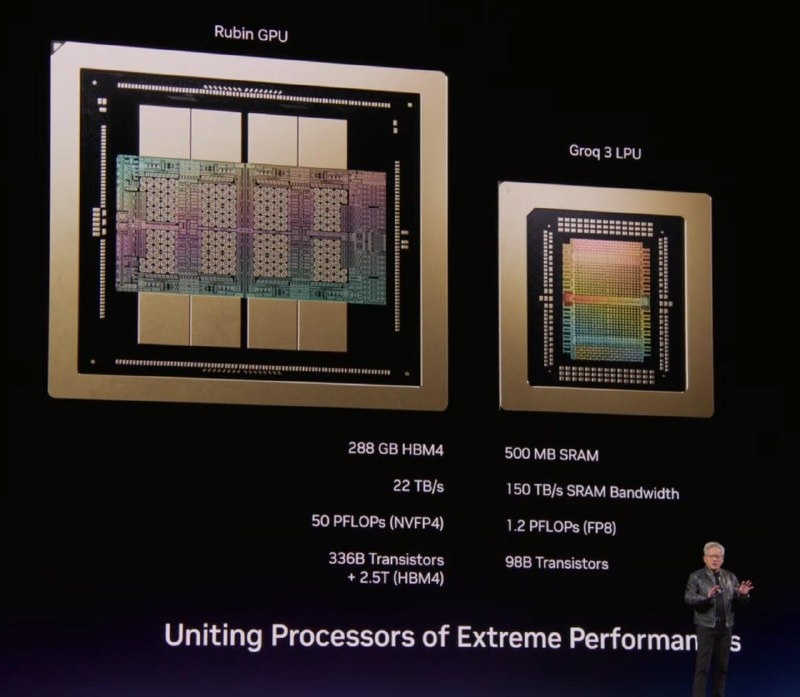

At the GTC conference, opened by Nvidia’s CEO, new technologies and developments are being discussed. This time, gaming graphics cards are not shown — and likely, we shouldn’t expect them in the near future 😭. Instead, attendees were introduced to a new module based on technologies from the recently acquired startup Groq.

Previously, Groq specialized in rapid token generation because their cards did not use HBM — a very fast but quite expensive and inaccessible memory. Instead, all data and tokens were stored in SRAM — ultra-high-speed memory (15 times faster than standard), which interacts directly with the compute blocks. However, such memory is very costly and limited in capacity: for example, the GB200 data center GPU contains only 126 MB of SRAM across two chips (63 MB each).

This was one of Groq’s challenges — they couldn’t run very large models due to SRAM limitations.

Now, the Groq 3 LPX module will become part of Nvidia server racks, specifically designed for scenarios requiring lightning-fast data generation. Nvidia believes that modern GPT models (allegedly with two trillion parameters) will operate at around 400 tokens per second.

In one such LPX rack (as shown in the second image), there will be 128 GB of SRAM — a huge amount compared to standard cards. But even this won’t be enough for processing large models entirely, so Nvidia recommends using it only for components like FFN or MOE — while attention mechanisms continue to run on regular Nvidia cards (see the fourth image).

Another innovation is shown in the last photo: plans for a chip based on the Vera Rubin architecture (the next generation has already been announced but is not yet available for sale), designed specifically for space applications and taking heat dissipation into account.

These are the latest updates!

Created with n8n:

https://cutt.ly/n8n

Created with syllaby:

https://cutt.ly/syllaby