What is happening with OpenClaw right now?

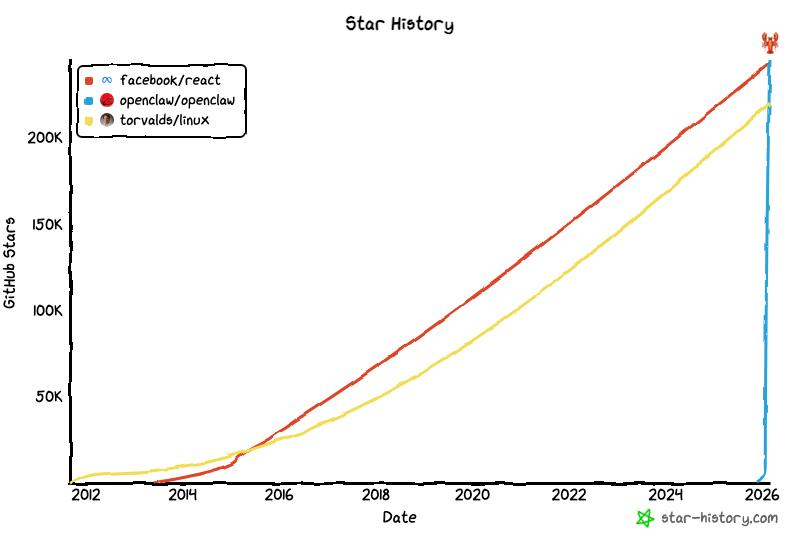

On GitHub, the project already has over 250,000 stars, making it one of the most popular software projects on the platform. The growth rate is what stands out: ten years ago, projects like Linux or React gathered their stars over years, while OpenClaw skyrocketed in a very short period.

Even after the creator of OpenClaw, Peter Steinberger, moved to OpenAI, development did not stop. Updates are released almost daily: they not only fix bugs and improve security but also add new features.

However, using OpenClaw in practice still comes with certain challenges.

Development is progressing at a very rapid pace

And this development is quite unconventional. Previously, new AI models appeared every few months behind a fixed, ChatGPT-style interface, so everything stayed familiar and straightforward.

With OpenClaw, the interface is dynamic, flexible, and multifunctional. You select a model (for example, Opus 4.6, GPT-5.3-Codex, or Kimi K2.5) and get a basic set of capabilities that expands every few days. On top of that, users create their own skills, write guides, and share them. Sometimes OpenClaw itself generates additional functions tailored to specific tasks.

When all these components start interacting, there is a risk of conflicts. For example, functionality you've added may conflict with a recent OpenClaw update, or with some new solution you haven't noticed yet because it appeared only recently.

The technology is still not ready for such a load

In my usual workflow, I create a separate chat for each question or task: for example, one dialogue for preparing a presentation and a different project in Claude Code. With OpenClaw, the agent constantly switches between tasks: while we are discussing an important document, a reminder or clarification request appears; while I am gathering materials for a post, an urgent question about statistics comes up from within OpenClaw.

This quickly creates confusion: multiple parallel tasks clutter the context, and the agent starts to get confused and make mistakes. Even if I decide to work calmly in the evening, the context can suddenly overflow, forcing the agent to compress it (compaction) in order to preserve knowledge. As a result, important details can be lost and previously added functions forgotten.

The biggest problem with OpenClaw is memory loss. For example, I added about ten functions I need, but after some time the agent simply forgets or ignores them. Then I have to remind it manually where the instructions are stored or how to perform certain operations.
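To make the compaction problem concrete, here is a minimal toy sketch in Python. It is not how OpenClaw implements compaction: the `Message` type, the `compact` function, and the character-based truncation (a stand-in for a model-written summary) are all assumptions made for illustration. It only shows why a detail from an older message can disappear once the history is squeezed into a fixed budget.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def compact(history: list[Message], keep_recent: int, summary_chars: int) -> list[Message]:
    """Replace all but the last `keep_recent` messages with one short summary.

    Hypothetical stand-in for compaction: a real agent would ask a model to
    summarize, here the older messages are simply joined and truncated.
    """
    if len(history) <= keep_recent:
        return list(history)

    split = len(history) - keep_recent
    old, recent = history[:split], history[split:]

    # Anything beyond the summary budget is lost for good.
    joined = " | ".join(message.text for message in old)
    summary = Message("system", "Summary: " + joined[:summary_chars])
    return [summary] + recent


history = [
    Message("user", "Presentation: use the blue template"),
    Message("user", "Post: gather statistics"),
    Message("user", "Skill: instructions live in skills/report.md"),
    Message("user", "What is on my calendar tomorrow?"),
    Message("assistant", "Two meetings in the morning."),
]

compacted = compact(history, keep_recent=2, summary_chars=40)
for message in compacted:
    print(message.role, "->", message.text)
```

In this example, the note about where the skill instructions live falls outside the summary budget, so the agent would have to be reminded of it again, which matches the forgetting described above.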

There are still very few users of OpenClaw

A common joke in recent weeks: you buy a Mac Mini, spend the evening installing and configuring this software, and then use it for reminders or news summaries in Telegram. There is some truth in that, although with proper setup on a good model (such as Opus 4.6 or GPT-5.3-Codex), it can come up with solutions for almost any task.

But how reliable that solution will be, and whether it is truly better than traditional methods, is the main question. Only you can answer it, because AI agent experts have not yet emerged as a distinct category of specialists. I regularly read guides on working with OpenClaw, and even the best authors are working in the dark, figuring out the principles on the fly. Maybe they are slightly ahead of me, but not by much.

How I decided to approach this

Currently, I use OpenClaw more as a knowledge platform than as a full-fledged assistant. When I try to solve a new task with its help, I don't focus on efficiency right away but on whether I can accomplish the task at all. Many ideas are later transferred to Claude Code without worrying about their reliability. Over time, such agents will become more stable, and I will already know how to use them properly.

In addition, I follow several simple procedures:

— Every night, OpenClaw automatically updates the Memory.MD file.

— Once a week, it checks itself for errors and security threats.

— When I was writing this text, I came up with another idea: I wrote a script for OpenClaw to compile a list of key functions and evaluate their effectiveness or find more optimal implementations. It resulted in a list of five points for further improvements.
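The nightly and weekly routines above can be thought of as a small schedule. The Python sketch below is only an illustration of that idea: the routine names, the `ROUTINES` table, and the `due_routines` helper are hypothetical and do not correspond to any OpenClaw API or configuration format.

```python
from datetime import datetime, timedelta

# Hypothetical routine names with the intervals described in the list above.
ROUTINES = {
    "update_memory_file": timedelta(days=1),  # nightly memory file update
    "self_check": timedelta(weeks=1),         # weekly error and security check
}


def due_routines(now: datetime, last_run: dict[str, datetime]) -> list[str]:
    """Return the routines whose interval has elapsed since their last run."""
    due = []
    for name, interval in ROUTINES.items():
        previous = last_run.get(name)
        # A routine that has never run is always due.
        if previous is None or now - previous >= interval:
            due.append(name)
    return due


now = datetime(2026, 3, 8, 3, 0)
last_run = {
    "update_memory_file": datetime(2026, 3, 7, 3, 0),  # ran a day ago
    "self_check": datetime(2026, 3, 5, 3, 0),          # ran three days ago
}
print(due_routines(now, last_run))
```

Keeping the intervals in one table makes it easy to see at a glance what the agent is expected to do on its own, and to add further checks later.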

The main rule: if something goes wrong, the first step is to ask the agent itself why it happened and how to prevent similar situations in the future. This works about half the time, which is already quite good.

I am confident that in six months to a year, such situations will come up in only about 5% of OpenClaw use, as the technology continues to improve.
