Imagine an evening lab session at MIT: a graduate student turns off the monitor and heads home, while their large language model-based system continues working autonomously. During the night, it engages in self-reflection — generating new tasks, writing explanations, initiating training, analyzing results, and selecting the most promising directions for further development. By morning, its effectiveness across many tasks has improved as if it had been worked on by an entire team of engineers.

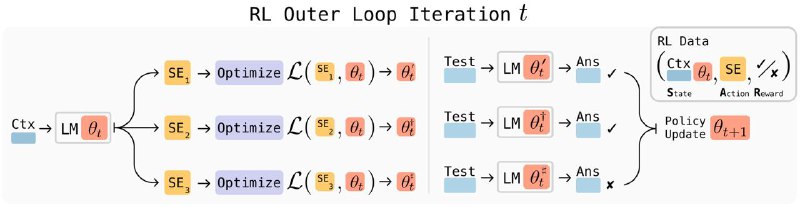

This is the essence of the SEAL method — “Self-Training through Self-Editing” systems. The model autonomously formulates the question “what am I lacking?”, creates a synthetic dataset for training, adjusts its weights, and immediately tests its updates. Correct improvements are rewarded, while mistakes are penalized. When tackling encyclopedic questions, its own notes proved even more useful than GPT-4.1’s materials. The improvement was especially noticeable in puzzle-solving: accuracy increased from zero to 72.5% within just a few self-training iterations.

But what’s the point of all this?

Such an approach to self-education allows a model to go from a hypothesis to a fully improved version overnight, without human intervention. In the future, systems may independently identify their weaknesses and generate new knowledge as needed. This poses a challenge for the industry — the need for standards to monitor “self-training” processes, new reliability metrics, and “stop-loss” mechanisms to prevent night-time experiments from going too far. For developers, it’s an opportunity to accelerate feature development, making updates more continuous and responsive.