How Claude Works Inside: Anthropic Reveals Its Architecture

While some experts were still debating over breakfast whether AI will replace humans, Anthropic showed how Claude actually works. It turns out to be much more interesting than it looks at first glance.

Inside, an entire team of agents works as a coordinated system, with no need for complex setups such as MCP.

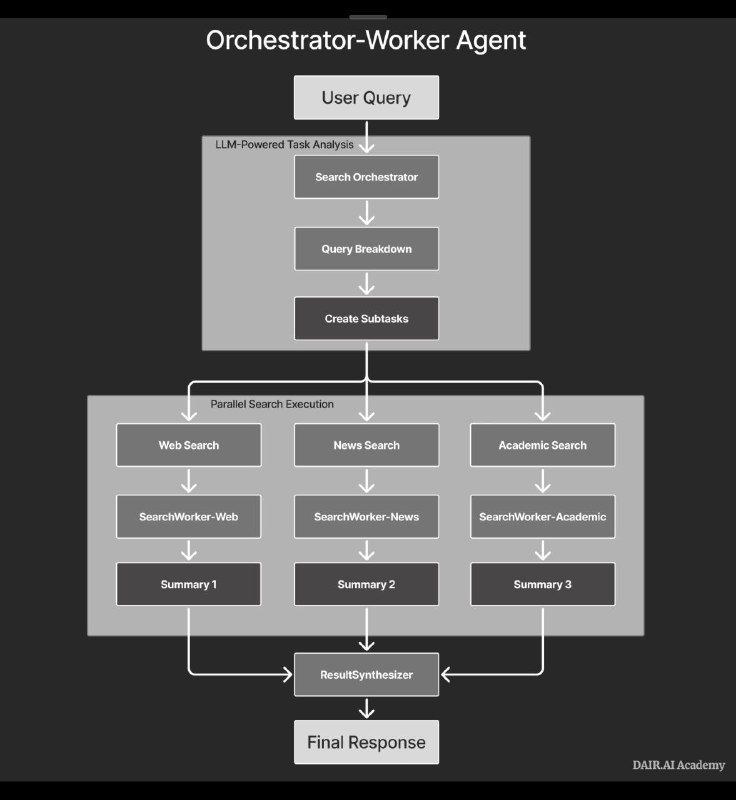

When you ask Claude a complicated question, it doesn’t just “think” like one big brain. Instead, it spins up a whole “mafia-like” crew of specialized agents, organized according to the Orchestrator-Worker pattern.

The main agent acts as a kind of boss, who:

– Analyzes your request and breaks it down into specific tasks

– Creates the necessary executors for each task

– Manages the entire team and compiles the final results

The specialized agents are the workers, each highly trained for a narrow job:

– Each has its own set of tools and prompts

– They work simultaneously, without interfering with each other

– Examples include SearchWorker-Web, SearchWorker-News, and SearchWorker-Academic, chosen by the type of information needed
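The division of roles described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Anthropic's implementation: `WorkerSpec`, `Subtask`, `WORKER_REGISTRY`, `plan_subtasks`, and the tool names are all hypothetical, and the decision about which workers to create is passed in by hand, whereas a real orchestrator would presumably let the model decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerSpec:
    """One specialized worker: its name, its own prompt, its own tools."""
    name: str
    system_prompt: str
    tools: tuple[str, ...] = ()


# Hypothetical registry keyed by the kind of information a subtask needs.
# The tool names are placeholders, not real tool identifiers.
WORKER_REGISTRY = {
    "web": WorkerSpec("SearchWorker-Web", "Search general web sources.", ("web_search",)),
    "news": WorkerSpec("SearchWorker-News", "Search recent news.", ("news_search",)),
    "academic": WorkerSpec("SearchWorker-Academic", "Search scholarly papers.", ("paper_search",)),
}


@dataclass
class Subtask:
    """A piece of the original request, assigned to exactly one worker."""
    description: str
    worker: WorkerSpec


def plan_subtasks(query: str, kinds: list[str]) -> list[Subtask]:
    """Orchestrator step: split one request into one subtask per information kind.

    Assumption: the caller supplies `kinds`; a real system would infer them.
    """
    return [
        Subtask(description=f"[{kind}] {query}", worker=WORKER_REGISTRY[kind])
        for kind in kinds
    ]


if __name__ == "__main__":
    for task in plan_subtasks("decline in demand for PHP developers", ["web", "news", "academic"]):
        print(task.worker.name, "->", task.description)
```

Keeping the prompt and tool list inside each `WorkerSpec` is what lets workers stay independent: the orchestrator only needs to know which spec to pick, not how the worker does its job.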

Imagine a situation: you ask, for example, “Provide statistics on the decline in demand for PHP developers.”

Instead of searching for the information itself, the main agent creates three specialists:

– One digs through online sources

– Another analyzes recent news

– The third reads scientific articles

All these agents operate in parallel, and a dedicated module, ResultSynthesizer, then combines their results into a comprehensive answer.
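As a rough sketch of that flow, the three workers can be modeled as coroutines that run concurrently, with a synthesizer step at the end. Everything here is an assumption made for illustration: `run_worker` is a stand-in that sleeps instead of calling a model, and `synthesize` merely joins strings, whereas the real ResultSynthesizer would presumably do something far richer.

```python
import asyncio


async def run_worker(name: str, query: str) -> str:
    """Stand-in for a real worker; a real one would call a model with tools."""
    await asyncio.sleep(0.01)  # simulates I/O such as a search request
    return f"{name}: findings for '{query}'"


def synthesize(results: list[str]) -> str:
    """Hypothetical ResultSynthesizer: here it only joins the partial results."""
    return "\n".join(results)


async def orchestrate(query: str) -> str:
    workers = ["SearchWorker-Web", "SearchWorker-News", "SearchWorker-Academic"]
    # gather() runs the coroutines concurrently and returns results in input order.
    results = await asyncio.gather(*(run_worker(w, query) for w in workers))
    return synthesize(list(results))


if __name__ == "__main__":
    print(asyncio.run(orchestrate("decline in demand for PHP developers")))
```

Because the workers wait on I/O at the same time, the total latency is close to that of the slowest worker rather than the sum of all three.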

What are the advantages?

All processes run simultaneously rather than sequentially. Each agent is responsible for its own domain and handles it better than a general-purpose agent would. The system can also scale to hundreds of agents, and if one fails, the others continue working.
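The "if one fails, the others continue" property can be illustrated with `asyncio.gather(..., return_exceptions=True)`, which returns exceptions as values instead of cancelling the whole batch. This is a minimal sketch under assumptions: `worker` and `run_all` are hypothetical names, and the failure is simulated with a flag.

```python
import asyncio


async def worker(name: str, fail: bool = False) -> str:
    """Simulated worker that either succeeds or raises."""
    await asyncio.sleep(0.01)
    if fail:
        raise RuntimeError(f"{name} failed")
    return f"{name}: ok"


async def run_all(specs: dict[str, bool]) -> tuple[list[str], list[str]]:
    """Run all workers; return (successful results, names of failed workers)."""
    names = list(specs)
    outcomes = await asyncio.gather(
        *(worker(n, specs[n]) for n in names),
        return_exceptions=True,  # exceptions come back as values, not raised
    )
    ok = [o for o in outcomes if not isinstance(o, BaseException)]
    failed = [n for n, o in zip(names, outcomes) if isinstance(o, BaseException)]
    return ok, failed


if __name__ == "__main__":
    results, failures = asyncio.run(run_all({"web": False, "news": True, "academic": False}))
    print(results, failures)
```

The orchestrator can then decide what to do with the failed names: retry them, or synthesize an answer from the partial results.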

The main innovation that sets this approach apart is using Claude for self-optimization: the AI is used to improve its own system, iteratively. Performance is also evaluated via LLM-as-judge, where one AI assesses the results produced by another against clear criteria. The term is worth remembering: LLM-as-judge means using language models as evaluators, which is fundamentally different from using AI merely as a meta-search engine over a given dataset.
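To make LLM-as-judge concrete, here is a small sketch of the evaluation loop. The rubric criteria, the prompt wording, the threshold, and the `stub_judge` function are all assumptions made for illustration; in practice the judge would be a call to a language model that returns scores, and the article does not specify which criteria Anthropic uses.

```python
from typing import Callable

# Hypothetical rubric; the real criteria are not given in the article.
RUBRIC = ("accuracy", "completeness", "source_quality")

# A judge takes a prompt and returns one score per criterion.
Judge = Callable[[str], dict[str, float]]


def build_judge_prompt(question: str, answer: str) -> str:
    """Build the instruction shown to the judging model."""
    criteria = ", ".join(RUBRIC)
    return (
        f"Question: {question}\nAnswer: {answer}\n"
        f"Score the answer from 0.0 to 1.0 on each of: {criteria}."
    )


def evaluate(question: str, answer: str, judge: Judge, threshold: float = 0.7) -> dict:
    """Ask the judge for scores and reduce them to a pass/fail verdict."""
    scores = judge(build_judge_prompt(question, answer))
    missing = [c for c in RUBRIC if c not in scores]
    if missing:
        raise ValueError(f"judge omitted criteria: {missing}")
    mean = sum(scores[c] for c in RUBRIC) / len(RUBRIC)
    return {"scores": scores, "mean": mean, "passed": mean >= threshold}


def stub_judge(prompt: str) -> dict[str, float]:
    """Placeholder for a model call; returns fixed scores for demonstration."""
    return {"accuracy": 0.9, "completeness": 0.6, "source_quality": 0.75}


if __name__ == "__main__":
    print(evaluate("PHP demand statistics?", "Some answer", stub_judge))
```

Passing the judge in as a function keeps the evaluation logic testable without a model: swap `stub_judge` for a real model call once the rubric is settled.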

By the way, you can assemble this architecture yourself using MCP servers. Yet most people still build simple wrappers over ChatGPT and wonder why they can’t scale or reach high efficiency.