A new programming benchmark recently appeared, and the results are disappointing: on its hardest problems, models solve 0% 😐

The benchmark is called LiveCodeBench Pro. It draws its problems from Codeforces and from the ICPC and IOI competitions, including the most advanced and difficult ones. All tasks were prepared by winners and medalists of these competitions, so the difficulty level is very high.

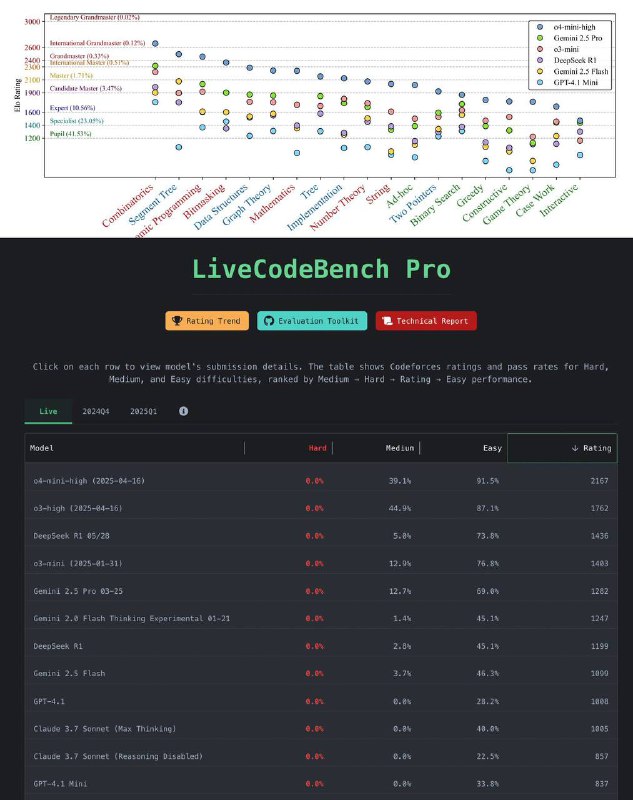

What is the outcome? Even the best models, such as o4-mini-high, reach a rating of roughly 2100, well short of human Grandmasters, whose ratings are usually around 2700.
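To get a feel for what a 600-point gap means, here is a minimal sketch that plugs the two approximate ratings into the standard Elo expected-score formula. It assumes the benchmark's ratings behave like ordinary Elo ratings on a 400-point scale, which the post itself does not state; the function name `expected_score` is just an illustrative helper.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score of player A against player B.

    Assumption: ratings follow the usual Elo logistic curve with a
    400-point scale. This is an illustration, not the benchmark's own code.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


model_rating = 2100        # approximate rating quoted for the best model
grandmaster_rating = 2700  # approximate Grandmaster rating quoted in the post

p = expected_score(model_rating, grandmaster_rating)
print(f"Expected score of the model vs. a Grandmaster: {p:.3f}")
```

Under that assumption, the model would be expected to outperform such a Grandmaster only about 3% of the time.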

At the same time, the vast majority of AI models can solve only simple problems and some medium-level ones. On truly hard problems, the success rate is zero. On complex topics such as game theory, or on problems that require handling rare cases, models are completely helpless.

They handle combinatorics and dynamic programming tasks reasonably well, but when it comes to game logic and unusual cases, their performance is roughly at the level of an average specialist or even a school student.

An interesting point: human errors usually come from implementation (inattentiveness, syntax mistakes, oversights), whereas AI failures usually stem from misunderstanding the solution idea, that is, from the conceptual level.

For now, olympiad-level competitors have not been replaced, and that seems unlikely to change in the near future.