🤖 RoboBrain 2.0 is an innovative artificial intelligence designed for the next generation of robots.

This open model handles a wide range of tasks, from environmental perception to robot control. It is already being described as a foundation for future humanoid machines that can operate in real-world conditions.

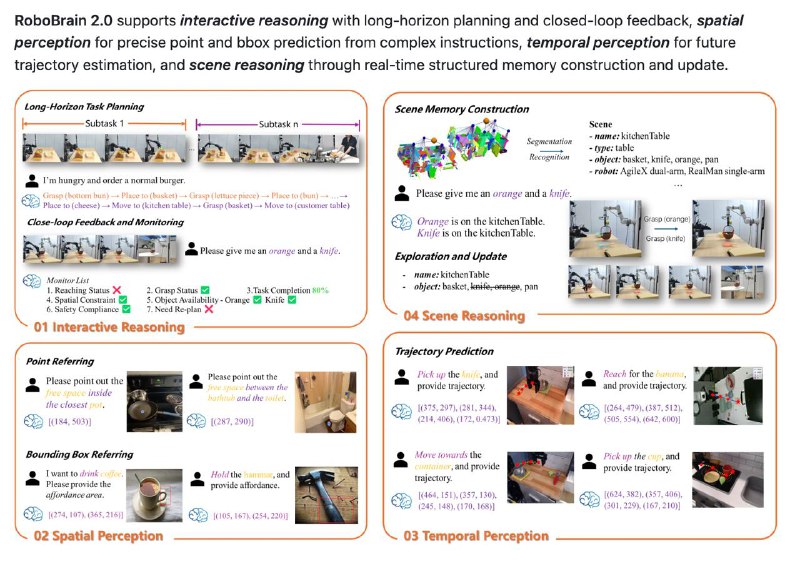

Key features:

– Capable of planning actions, perceiving information, and functioning in the real world;

– Designed for easy integration into real projects and robotic systems, since it has only 7 billion parameters;

– Fully open for study and further development.

Regarding its architecture, it:

– Processes images, long videos, and high-resolution visual data;

– Understands complex textual commands and instructions;

– Splits the input into visual data, which passes through the Vision Encoder and MLP Projector, and textual data; both are converted into a unified token stream;

– Feeds that stream into the LLM Decoder, the component responsible for reasoning, planning, and determining spatial coordinates and relationships.
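The data flow described above can be illustrated with a toy sketch. This is not RoboBrain's actual code: the function names, the dimensions, and the single-layer projector are all assumptions made for illustration, and random numbers stand in for real learned weights. It only shows how visual features get projected to the decoder's width and joined with text tokens into one sequence.

```python
import random

VISION_DIM = 8  # hypothetical width of the vision encoder's output
LLM_DIM = 4     # hypothetical width of the decoder's token embeddings


def vision_encoder(patches):
    """Stand-in encoder: returns one feature vector per image patch."""
    rng = random.Random(0)  # fixed seed keeps the toy output repeatable
    return [[rng.uniform(-1.0, 1.0) for _ in range(VISION_DIM)] for _ in patches]


def mlp_projector(features, weights):
    """One linear layer plus ReLU, mapping VISION_DIM features to LLM_DIM."""
    return [
        [max(0.0, sum(f * w for f, w in zip(feat, column))) for column in weights]
        for feat in features
    ]


def embed_text(words):
    """Stand-in text embedding: one LLM_DIM vector per word."""
    return [[float(len(word) + i) for i in range(LLM_DIM)] for word in words]


def build_token_stream(patches, instruction, weights):
    """Concatenate projected visual tokens and text tokens into one sequence."""
    visual_tokens = mlp_projector(vision_encoder(patches), weights)
    text_tokens = embed_text(instruction.split())
    return visual_tokens + text_tokens


rng = random.Random(1)
weights = [[rng.uniform(-1.0, 1.0) for _ in range(VISION_DIM)] for _ in range(LLM_DIM)]
patches = ["patch_0", "patch_1", "patch_2"]  # placeholders for image patches
stream = build_token_stream(patches, "pick up the red cup", weights)
print(len(stream), len(stream[0]))  # 3 visual + 5 text tokens, each LLM_DIM wide
```

In the real model, a sequence like this would go to the LLM Decoder; here the sketch stops at the unified stream.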

Progress like this could make mass production of high-tech humanoids feasible by 2027.

Now, artificial intelligence is confidently entering the physical world and actively exploring it.

To set up the project, run the following commands:

git clone https://github.com/FlagOpen/RoboBrain2.0.git

cd RoboBrain2.0

# Create and activate a virtual environment

conda create -n robobrain2 python=3.10

conda activate robobrain2

pip install -r requirements.txt

Additional resources on the project:

github.com/FlagOpen/RoboBrain2.0

huggingface.co/collections/BAAI/robobrain20-6841eeb1df55c207a4ea0036/

This area is rapidly evolving and opening new horizons in robotics and artificial intelligence.