The Chinese lab BAAI (Beijing Academy of Artificial Intelligence) has released a new open model that leads on robotics benchmarks.

RoboBrain 2.0 is a general-purpose model for robot control. It is more than a video-understanding model: it is meant to act as a true “brain” for machines. Beyond perceiving its environment, the system can reason, plan long-horizon actions, navigate three-dimensional space, and analyze scenes with dedicated mechanisms. Its memory accumulates information about the scene and is continuously updated over time.
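The idea of a memory that accumulates observations and is refreshed over time can be illustrated with a toy scene memory. This is a minimal sketch under assumed semantics, not how RoboBrain 2.0 actually stores its memory: `SceneMemory`, `ObjectEntry`, the `max_age` forgetting rule, and the (x, y, z) position format are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

Position = tuple[float, float, float]  # hypothetical (x, y, z) in some world frame


@dataclass
class ObjectEntry:
    """One remembered object: where it was last seen and when."""
    position: Position
    last_seen: float  # timestamp of the most recent observation


@dataclass
class SceneMemory:
    """Hypothetical scene memory: newer observations overwrite older ones."""
    max_age: float = 30.0  # assumed: forget objects unseen for this many seconds
    objects: dict[str, ObjectEntry] = field(default_factory=dict)

    def update(self, observations: dict[str, Position], now: float) -> None:
        # Add newly seen objects and refresh position/timestamp of known ones.
        for name, pos in observations.items():
            self.objects[name] = ObjectEntry(position=pos, last_seen=now)
        # Drop objects that have not been observed for longer than max_age.
        stale = [n for n, e in self.objects.items() if now - e.last_seen > self.max_age]
        for name in stale:
            del self.objects[name]

    def where_is(self, name: str) -> Optional[Position]:
        # Return the last known position, or None if the object is not remembered.
        entry = self.objects.get(name)
        return entry.position if entry else None
```

For example, after `update({"cup": (0.5, 0.2, 0.9), "plate": (0.4, 0.0, 0.9)}, now=0.0)` and `update({"cup": (1.0, 0.2, 0.9)}, now=5.0)` with `max_age=10.0`, the memory reports the cup's new position; a later `update({}, now=12.0)` forgets the plate, which has not been seen for 12 seconds.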

Besides the LLM decoder, the model is built on two components: a Vision Encoder and an MLP Projector. Visual inputs (videos and photos) are processed by the vision encoder, and the MLP projector maps the resulting features into the language model's embedding space. Together with the text input, they reach the LLM decoder, which performs the required task.
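To make this data flow concrete, here is a minimal pure-Python sketch of the general "vision encoder, then projector, then LLM" pattern. It is not RoboBrain 2.0's code: the vision encoder itself is not modeled (patch features are given as input), and the two-layer GELU projector, the tiny dimensions, the random weights, and the helper names `MLPProjector` and `build_input_sequence` are hypothetical. As a further simplification, all visual tokens are placed before the text tokens.

```python
import math
import random

Vector = list[float]
Matrix = list[list[float]]


def matvec(w: Matrix, x: Vector, b: Vector) -> Vector:
    """Compute W @ x + b for a weight matrix stored row by row."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]


def gelu(x: float) -> float:
    """Exact GELU activation: x * Phi(x), Phi being the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def random_linear(d_in: int, d_out: int, rng: random.Random) -> tuple[Matrix, Vector]:
    """Randomly initialised linear layer (illustration only, no trained weights)."""
    w = [[rng.gauss(0.0, 1.0 / math.sqrt(d_in)) for _ in range(d_in)] for _ in range(d_out)]
    return w, [0.0] * d_out


class MLPProjector:
    """Hypothetical two-layer MLP mapping vision features to the LLM embedding size."""

    def __init__(self, d_vision: int, d_llm: int, seed: int = 0):
        rng = random.Random(seed)
        self.w1, self.b1 = random_linear(d_vision, d_llm, rng)
        self.w2, self.b2 = random_linear(d_llm, d_llm, rng)

    def __call__(self, patch: Vector) -> Vector:
        hidden = [gelu(h) for h in matvec(self.w1, patch, self.b1)]
        return matvec(self.w2, hidden, self.b2)


def build_input_sequence(patch_features: list[Vector], text_embeddings: list[Vector],
                         projector: MLPProjector) -> list[Vector]:
    """Project each visual patch and put the visual tokens before the text tokens."""
    visual_tokens = [projector(p) for p in patch_features]
    return visual_tokens + text_embeddings
```

With 3 patch features of size 8 and 5 text embeddings of size 4, `build_input_sequence(patches, text, MLPProjector(8, 4))` returns a sequence of 8 vectors, each of size 4: the projector's only job is to make visual features the same width as text embeddings so the decoder can treat both as one token sequence.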

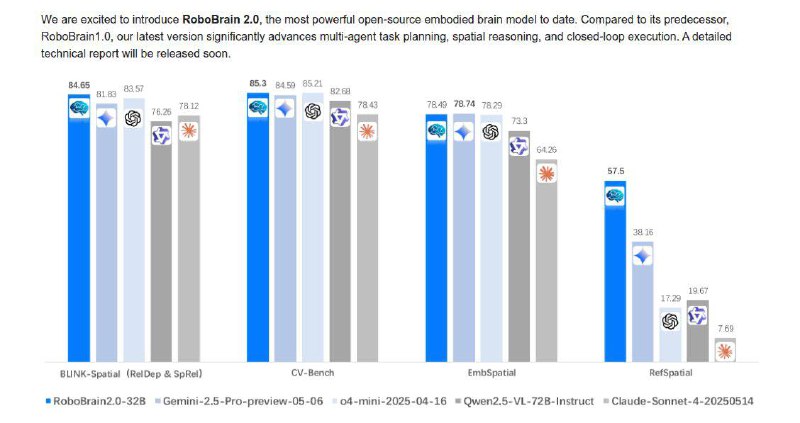

Currently, the model is available as a 7B (7-billion-parameter) version, and a 32B version is planned for release soon. On robotics benchmarks, the model performs strongly, outperforming both open and commercial competitors, including Claude Sonnet 4 and o4-mini.

It is encouraging to see renewed interest in open robotics development, which gives communities of professionals and enthusiasts the opportunity to advance the technology.

The entire project is published on GitHub and Hugging Face, making it accessible to a broad audience.